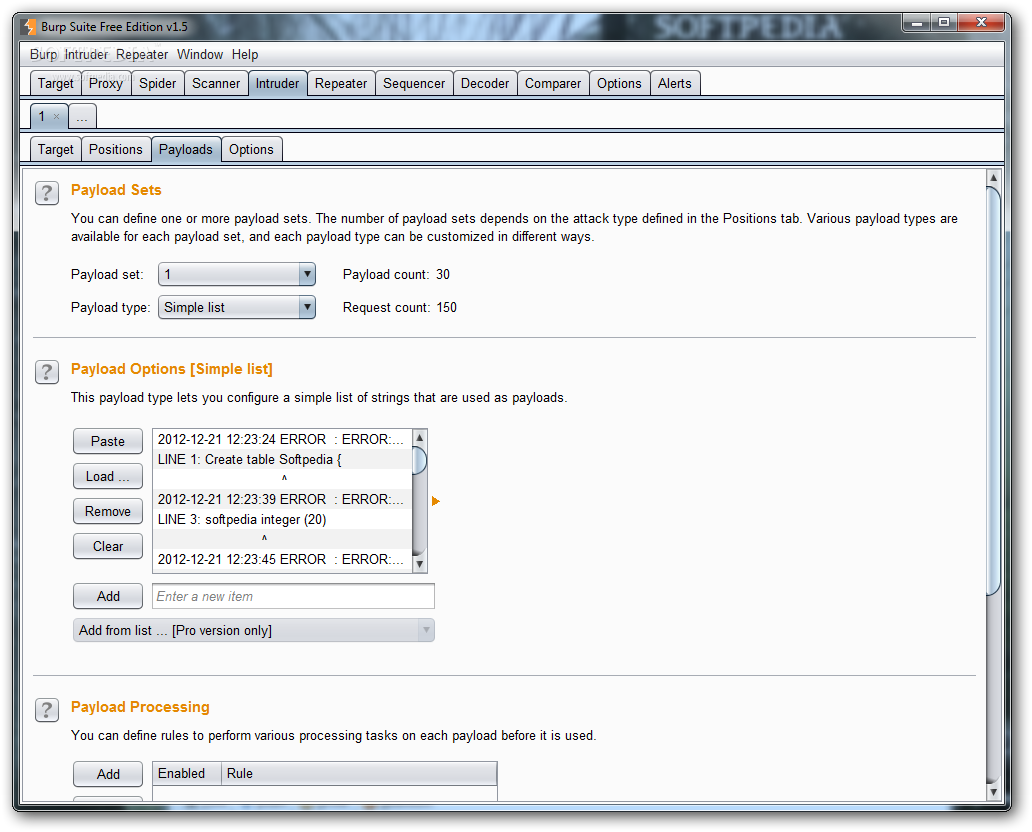

Burp Suite's Repeater lets a user send the same request repeatedly with manual modifications. It is typically used to answer questions such as:

- Are user-supplied values being verified at all?
- If they are verified, how well is it being done?
- What values is the server expecting in an input parameter or request header?
- How does the server handle unexpected values?
- Is input sanitization being applied by the server?
- How well does the server sanitize user-supplied inputs?
- What sanitization style is the server using?
- Among all the cookies present, which one is the actual session cookie?
- How is CSRF protection implemented, and is there a way to bypass it?

The Sequencer is an entropy checker that tests the randomness of tokens generated by the web server. These tokens are generally used for authentication in sensitive operations; cookies and anti-CSRF tokens are examples. Ideally, the tokens are generated in a fully random manner, so that every possible character is equally likely to appear at each position. This uniformity should hold both bit-wise and character-wise.

The entropy analyzer works as a hypothesis test. Initially, it is assumed that the tokens are random. The tokens are then tested on certain parameters for certain characteristics. A significance level is defined as the minimum probability that the tokens must exhibit for a characteristic: if the probability computed for a characteristic falls below the significance level, the hypothesis that the tokens are random is rejected.
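The hypothesis test behind the Sequencer description can be illustrated with a small bit-wise check. The sketch below is not Burp's implementation; it assumes a single characteristic (the balance of ones and zeros, often called the monobit or frequency test) and a significance level of 0.01. The function names `tokens_to_bits`, `monobit_p_value`, and `looks_random` are hypothetical and chosen for illustration only.

```python
import math
import secrets
from typing import Iterable


def tokens_to_bits(tokens: Iterable[bytes]) -> str:
    """Concatenate all tokens into one string of '0' and '1' characters."""
    return "".join(f"{byte:08b}" for token in tokens for byte in token)


def monobit_p_value(bits: str) -> float:
    """Probability of seeing a ones/zeros imbalance at least this large
    if the bits really were random (frequency test)."""
    n = len(bits)
    if n == 0:
        raise ValueError("need at least one bit")
    # Map 1 -> +1 and 0 -> -1; a random stream should sum close to zero.
    s = sum(1 if b == "1" else -1 for b in bits)
    return math.erfc(abs(s) / math.sqrt(2 * n))


def looks_random(tokens: Iterable[bytes], significance_level: float = 0.01) -> bool:
    """Start from the hypothesis that the tokens are random and reject it
    when the p-value falls below the significance level (assumed 0.01)."""
    return monobit_p_value(tokens_to_bits(tokens)) >= significance_level


if __name__ == "__main__":
    # Tokens from a cryptographic source versus constant, all-zero tokens.
    strong = [secrets.token_bytes(16) for _ in range(200)]
    weak = [b"\x00" * 16 for _ in range(200)]
    print("strong sample p-value:", monobit_p_value(tokens_to_bits(strong)))
    print("weak sample accepted as random:", looks_random(weak))
```

Passing this one check does not make a token unpredictable: a constant token such as `b"\x0f" * 16` has perfectly balanced bits and still passes. That is why the tokens are tested for several characteristics, both bit-wise and character-wise, rather than for a single one.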
